Why TikTok’s ‘Immigration Status’ Privacy Language Is Raising Alarms — and What It Really Means

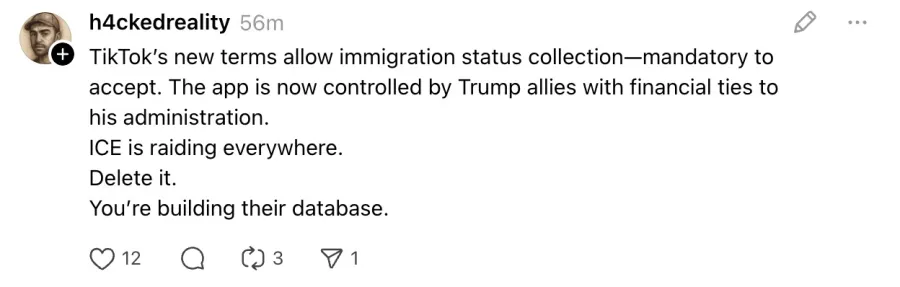

When TikTok users across the United States began receiving in-app notifications about changes to the platform’s privacy policy, the reaction was swift and emotional. Social media timelines quickly filled with screenshots, warnings, and alarmist interpretations of the revised language, particularly sections referencing the potential collection of highly sensitive personal information.

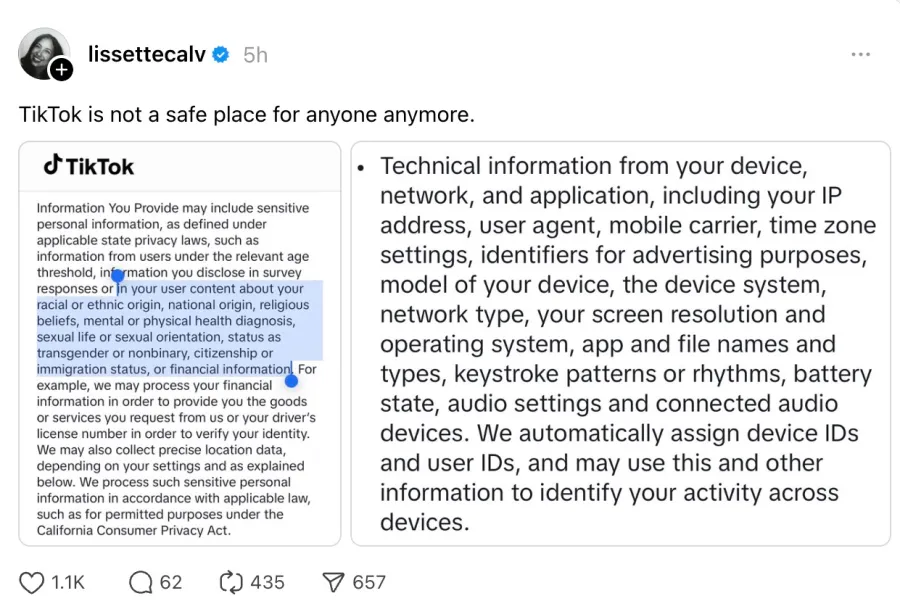

At the center of the uproar was a clause stating that TikTok may process data related to users’ “sexual life or sexual orientation, status as transgender or nonbinary, citizenship or immigration status,” among other deeply personal categories. For many Americans, reading this language — especially amid an already tense political and social climate — triggered immediate concern. Some users openly questioned whether the app was preparing to surveil vulnerable communities, while others threatened to delete their accounts altogether.

Yet a closer examination reveals that this language is neither new nor unique to TikTok — and, crucially, it does not mean what many users fear.

A Policy Change That Wasn’t Really a Change

Despite the intensity of the reaction, the controversial wording predates TikTok’s recent U.S. ownership restructuring. Nearly identical language appeared in the company’s privacy policy as early as August 19, 2024, well before the joint venture deal that transferred TikTok’s U.S. operations to a domestically governed entity.

What has changed is timing and visibility. Because the ownership transition required the creation of a new legal entity, TikTok was obligated to notify users and re-present its terms and privacy documentation. For many users, this was the first time they had ever read the policy in detail — and encountering blunt legal language without context proved jarring.

In reality, the revised policy largely reflects compliance with evolving U.S. state privacy laws, particularly in California, rather than a shift toward more aggressive data collection.

The Legal Backdrop: Why the Language Is So Explicit

To understand why TikTok’s policy reads the way it does, it’s necessary to examine the regulatory environment governing data privacy in the United States.

Laws such as the California Consumer Privacy Act (CCPA) and the California Privacy Rights Act (CPRA), which amended and expanded it, impose strict disclosure requirements on companies that collect or process personal data. Under these laws, businesses must clearly inform users if they collect what the statutes define as “sensitive personal information.”

That definition is expansive and includes:

- Precise geolocation data
- Racial or ethnic origin
- Religious or philosophical beliefs
- Health diagnoses
- Biometric and genetic data
- Sexual life or sexual orientation
- Citizenship or immigration status

Notably, citizenship and immigration status were explicitly added to the statutory definition of sensitive personal information in 2023, when California Governor Gavin Newsom signed Assembly Bill 947 into law.

Because of these legal requirements, companies are often advised to list sensitive data categories explicitly — even if they are only collected incidentally, such as when users voluntarily share personal experiences in posts, videos, comments, or surveys.

“Telling users exactly what could be collected is not optional under these laws,” explains Jennifer Daniels, a partner at law firm Blank Rome specializing in regulatory and corporate compliance. “If a platform might process sensitive information, even indirectly, it must disclose that possibility.”

Content, Not Surveillance

One of the most misunderstood aspects of TikTok’s policy is the source of the sensitive data it references. The policy does not suggest that TikTok actively seeks out users’ immigration status, sexual orientation, or health conditions through covert tracking or profiling.

Rather, it acknowledges that such information may appear in user-generated content.

If a creator posts a video discussing their mental health journey, immigration experience, or gender identity, that information becomes part of the content hosted on the platform. From a legal standpoint, hosting and processing that content constitutes “collection,” even if the platform did nothing more than provide the space for the user to speak.

“TikTok is essentially saying that if you choose to disclose something sensitive, the platform processes that content like any other,” says Ashlee Difuntorum, an associate at KHIKS with experience representing technology companies. “The policy sounds alarming because it’s written for regulators and litigators, not everyday users.”

Litigation, Not Curiosity, Drives Disclosure

Another key factor behind TikTok’s detailed disclosures is the growing threat of privacy-related litigation. According to Philip Yannella, co-chair of Blank Rome’s Privacy, Security, and Data Protection Practice, companies have increasingly faced legal demands under laws like the California Invasion of Privacy Act (CIPA).

“In recent cases, plaintiffs’ attorneys have alleged unlawful collection of racial, ethnic, or immigration-related data,” Yannella notes. “Companies respond by making their disclosures as comprehensive as possible to limit liability.”

In other words, specificity is a defensive legal strategy — not evidence of expanded surveillance.

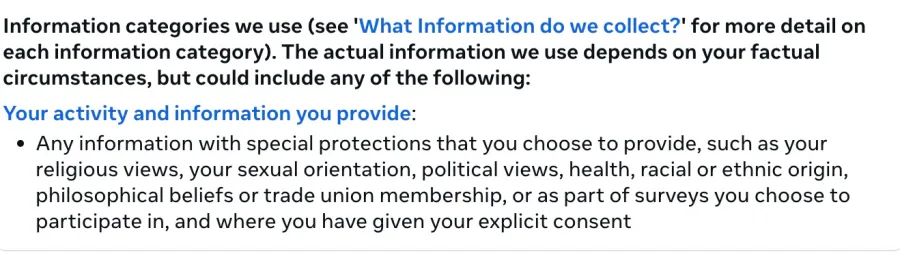

Not Just TikTok: An Industry-Wide Practice

While TikTok’s policy has drawn particular scrutiny, similar disclosures appear across the social media landscape. Meta, Google, and other major platforms also acknowledge that they may process sensitive personal information, though some choose broader phrasing rather than enumerating every legally defined category.

Meta’s privacy policy, for example, discusses sensitive data in detail but stops short of explicitly naming immigration status. Legal experts note that these stylistic differences reflect corporate risk tolerance rather than substantive differences in data practices.

Ironically, greater transparency can sometimes create more fear.

“Spelling everything out can make policies less digestible for users,” one privacy attorney told TechCrunch. “But from a compliance standpoint, it’s often safer.”

Political Climate Amplifies Anxiety

The public response to TikTok’s policy cannot be separated from the broader political environment in the United States.

Immigration enforcement has intensified under the Trump administration, sparking widespread protests and civil unrest. In Minnesota, tensions reached a breaking point as residents clashed with Immigration and Customs Enforcement (ICE) agents. The unrest culminated in an economic blackout, with hundreds of businesses closing in protest, following thousands of arrests and the death of American citizen Renée Good.

Against this backdrop, any mention of “immigration status” in a data policy feels ominous — particularly for undocumented individuals, LGBTQ+ communities, and political activists.

It is therefore unsurprising that users interpreted TikTok’s language through a lens of fear, imagining worst-case scenarios involving government access to personal data.

The Irony of TikTok’s U.S. Transition

There is a deep irony underlying the current panic. TikTok’s move to place its U.S. operations under American ownership was driven by concerns that Chinese laws could compel ByteDance to share user data with Beijing.

Chinese legislation, including the 2017 National Intelligence Law and the 2021 Data Security Law, requires companies to assist state intelligence efforts. U.S. lawmakers feared this could expose American users to surveillance or algorithmic manipulation.

Now, however, public anxiety has shifted inward. Many Americans are less worried about China accessing their data than about their own government potentially doing so.

This reversal underscores a broader truth: distrust of institutions — corporate or governmental — has become a defining feature of the digital age.

What the Policy Actually Promises

Importantly, TikTok’s policy repeatedly states that any processing of sensitive personal information will occur “in accordance with applicable law.” The document explicitly references the CCPA and other state statutes, emphasizing user rights to access, limit, or delete certain data.

The policy does not claim that TikTok sells or freely shares sensitive information with law enforcement or government agencies. Like other U.S. companies, TikTok would be required to respond to lawful requests — but that obligation exists regardless of ownership structure.

Panic vs. Reality

The current wave of concern appears to be driven less by new risks and more by newly noticed language. For many users, the realization that social platforms legally “collect” the content they post — including deeply personal stories — is uncomfortable, even if it has always been true.

Social media has long encouraged radical openness while obscuring its legal and technical underpinnings. When those realities surface in dense legal prose, the illusion of privacy cracks.

The Bigger Question

Ultimately, the TikTok controversy highlights a larger issue: the gap between how users feel about digital privacy and how data systems actually function.

Platforms are required to explain themselves in legal terms, not emotional ones. Users, meanwhile, are navigating an era of political polarization, surveillance anxiety, and diminishing trust.

The result is a collision — not of policy changes, but of perception.

TikTok’s privacy language may be blunt, unsettling, and poorly timed. But it is not evidence of a new surveillance regime. Instead, it is a reflection of a regulatory system struggling to catch up with how people share their lives online — and a society increasingly aware of the costs of doing so.