Nvidia Aims to Become the Android Platform for General-Purpose Robotics

Nvidia has made one of its most ambitious moves yet toward redefining the future of robotics, unveiling at CES 2026 a comprehensive stack of robot foundation models, simulation platforms, and edge hardware designed to accelerate the rise of generalist robots. The announcement signals Nvidia’s clear intention to position itself as the default operating platform for robotics in much the same way Android became the backbone of the global smartphone ecosystem.

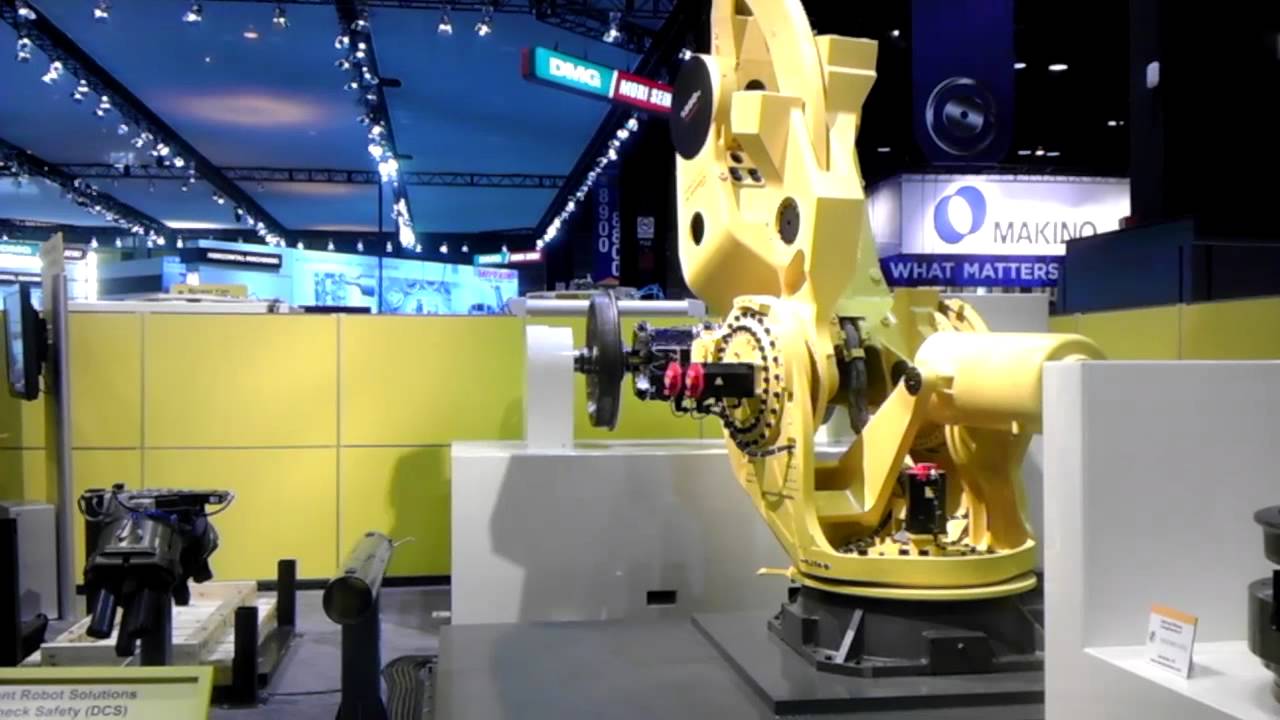

At the heart of this strategy is a recognition that artificial intelligence is rapidly moving beyond the cloud and into the physical world. As sensors become cheaper, simulation environments more realistic, and AI models more capable of reasoning and generalizing across tasks, robots are beginning to transition from narrowly programmed machines into adaptive systems that can learn, plan, and act in complex environments. Nvidia’s latest announcements aim to provide the foundational infrastructure required to make that transition scalable, safe, and accessible.

From Cloud AI to Physical Intelligence

For more than a decade, Nvidia has been synonymous with the rise of modern AI, supplying the GPUs that power data centers, large language models, and generative AI systems. With its CES 2026 announcements, the company is extending that leadership into what it calls “physical AI” — intelligence that can perceive, reason about, and interact with the real world.

This shift reflects a broader trend across the technology industry. As AI systems mature, their value increasingly lies not just in generating text or images, but in controlling machines that operate in factories, warehouses, hospitals, homes, and public spaces. The challenge is that the physical world is messy, unpredictable, and expensive to experiment in. Training robots purely through real-world trial and error is slow, costly, and often unsafe.

Nvidia’s answer is a tightly integrated full-stack ecosystem that spans data generation, simulation, model training, deployment, and on-device inference. By owning each layer of the stack — from foundation models to edge hardware — Nvidia hopes to reduce friction for developers and accelerate the adoption of more capable, general-purpose robots.

A New Generation of Robot Foundation Models

Central to Nvidia’s announcement is a new suite of open foundation models for robotics, all made available through Hugging Face. These models are designed to move robotics beyond task-specific automation and toward systems that can reason, plan, and adapt across diverse scenarios.

Among the most significant releases are Cosmos Transfer 2.5 and Cosmos Predict 2.5, two so-called “world models” that play a critical role in synthetic data generation and policy evaluation. World models allow robots to learn about the structure and dynamics of the physical environment in simulation, dramatically reducing the need for costly real-world data collection.

Cosmos Transfer 2.5 focuses on generating high-quality synthetic data that mirrors real-world conditions, helping bridge the gap between simulation and reality. Cosmos Predict 2.5, meanwhile, enables developers to evaluate robot policies in simulated environments, testing how systems might behave under a wide range of conditions before they are ever deployed.

Complementing these world models is Cosmos Reason 2, a reasoning vision-language model (VLM) designed to let AI systems see, understand, and act in the physical world. Unlike traditional perception models that focus narrowly on object detection or scene recognition, Cosmos Reason integrates visual understanding with language and reasoning capabilities. This allows robots to interpret complex instructions, understand context, and make decisions based on both visual input and high-level goals.

Isaac GR00T and the Push Toward Humanoid Robots

Perhaps the most attention-grabbing component of Nvidia’s new stack is Isaac GR00T N1.6, the company’s next-generation vision-language-action (VLA) model built specifically for humanoid robots. GR00T represents Nvidia’s vision of a general-purpose robotic “brain” that can coordinate perception, reasoning, and action across an entire humanoid body.

GR00T relies on Cosmos Reason as its cognitive core, using it to interpret sensory input and plan actions. What sets it apart is its ability to unlock whole-body control, allowing humanoid robots to move, balance, and manipulate objects simultaneously. This is a significant step beyond earlier systems that treated locomotion and manipulation as largely separate problems.

By enabling coordinated, whole-body behaviors, GR00T opens the door to robots that can perform complex tasks such as assembling components, handling tools, navigating cluttered spaces, or assisting humans in dynamic environments. While fully autonomous humanoid robots remain a long-term goal, Nvidia’s work suggests that the underlying software foundations are beginning to fall into place.

Simulation as the Backbone of Robotics Development

Recognizing that real-world experimentation remains one of the biggest bottlenecks in robotics, Nvidia also introduced Isaac Lab-Arena, an open-source simulation framework hosted on GitHub. Isaac Lab-Arena serves as a critical pillar of Nvidia’s physical AI platform, providing a safe and scalable environment for training and validating robotic capabilities.

As robots take on more complex tasks — from precise object manipulation to intricate operations like cable installation — the cost and risk of physical testing increase dramatically. Mistakes can damage equipment, endanger humans, or require expensive downtime. Isaac Lab-Arena addresses this challenge by enabling developers to test, iterate, and benchmark robotic behaviors in highly realistic virtual environments.

The framework consolidates task scenarios, training tools, and widely used benchmarks such as Libero, RoboCasa, and RoboTwin into a unified platform. This is particularly significant in an industry that has long lacked standardized benchmarks and shared evaluation frameworks. By providing a common reference point, Isaac Lab-Arena could help accelerate progress and improve comparability across different robotic systems.

OSMO: Connecting the Robotics Workflow

To tie the ecosystem together, Nvidia is expanding the role of OSMO, its open-source command center for robotics development. OSMO functions as connective infrastructure, integrating the entire workflow from data generation and simulation to model training and deployment.

One of OSMO’s key strengths is its ability to operate seamlessly across both desktop and cloud environments. This flexibility allows developers to scale their workloads as needed, running lightweight experiments locally or leveraging cloud resources for large-scale training. By reducing the operational complexity of robotics development, Nvidia hopes to lower the barrier to entry for startups, researchers, and independent developers.

Edge Hardware Built for Physical AI

No robotics platform is complete without powerful on-device compute, and Nvidia used CES 2026 to introduce the Blackwell-powered Jetson T4000 module, the newest member of its Thor family. The Jetson T4000 is positioned as a cost-effective upgrade for edge AI, delivering up to 1,200 teraflops of AI compute and 64 gigabytes of memory while operating within a 40- to 70-watt power envelope.

This combination of performance and efficiency is critical for robotics applications, where power, heat, and space constraints often limit what can be deployed on-device. By enabling advanced AI models to run directly on robots, the Jetson T4000 reduces reliance on cloud connectivity and on remote inference, which adds network latency to every decision.

In practical terms, this means robots can react more quickly to their environments, operate in areas with limited connectivity, and maintain greater autonomy. For Nvidia, it also reinforces the company’s strategy of tightly coupling hardware and software to deliver optimized performance.
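
The Jetson T4000 figures quoted above can be turned into a rough efficiency estimate. The short Python sketch below simply divides the stated peak compute by the two ends of the stated power envelope. It assumes, for illustration only, that the "up to 1,200 teraflops" figure could apply anywhere in the 40- to 70-watt range; in practice peak throughput is likely tied to the higher power setting, so the 40-watt result should be read as an optimistic upper bound. The function and constant names are hypothetical, not part of any Nvidia tool.

```python
def tflops_per_watt(peak_tflops: float, watts: float) -> float:
    """Return compute efficiency in teraflops per watt."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return peak_tflops / watts


# Figures as quoted in the article; the numeric precision behind the
# teraflops number is not stated there, so none is assumed here.
PEAK_TFLOPS = 1200.0
POWER_MIN_W = 40.0
POWER_MAX_W = 70.0

# Conservative case: peak compute requires the full 70 W.
conservative = tflops_per_watt(PEAK_TFLOPS, POWER_MAX_W)

# Optimistic case (assumption): peak compute is reachable at 40 W.
optimistic = tflops_per_watt(PEAK_TFLOPS, POWER_MIN_W)

print(f"Conservative: {conservative:.1f} TFLOPS/W")
print(f"Optimistic:   {optimistic:.1f} TFLOPS/W")
```

Under these assumptions the module would land somewhere between roughly 17 and 30 teraflops per watt, which gives a sense of why the article treats power efficiency as central to on-robot inference.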

Deepening Ties with Hugging Face

A key component of Nvidia’s strategy is openness, and the company is deepening its partnership with Hugging Face to make robotics development more accessible. Through this collaboration, Nvidia is integrating its Isaac and GR00T technologies into Hugging Face’s LeRobot framework, allowing a broader community of developers to experiment with robot training without requiring expensive hardware or specialized expertise.

The partnership brings together two massive developer ecosystems: Nvidia’s estimated 2 million robotics developers and Hugging Face’s 13 million AI builders. By meeting developers where they already work, Nvidia increases the likelihood that its tools and models become the default choice for robotics experimentation.

As part of this effort, Hugging Face’s open-source Reachy 2 humanoid robot now works directly with Nvidia’s Jetson Thor chip. This allows developers to test different AI models and control strategies on real humanoid hardware without being locked into proprietary systems. The emphasis on interoperability and openness stands in contrast to more closed robotics platforms, and could prove decisive in attracting a diverse developer base.

An Android Moment for Robotics?

The broader ambition behind Nvidia’s CES 2026 announcements is clear: the company wants to become the foundational platform on which the next generation of robots is built. Just as Android provided a common operating system that enabled rapid innovation across the smartphone industry, Nvidia aims to offer a shared hardware-software stack that lowers costs, accelerates development, and standardizes key components of robotics.

There are early signs that this strategy is gaining traction. Robotics has become the fastest-growing category on Hugging Face, with Nvidia’s models leading in downloads and usage. At the same time, major robotics companies, including Boston Dynamics, Caterpillar, Franka Robotics, and NEURA Robotics, are already using Nvidia’s technologies in their products and research efforts.

This growing adoption suggests that Nvidia’s combination of performance, openness, and ecosystem integration is resonating with both established players and emerging startups. If the company can maintain this momentum, it may succeed in shaping not just individual products, but the overall direction of the robotics industry.

Looking Ahead

While the vision of truly generalist robots capable of operating seamlessly in the human world remains a long-term goal, Nvidia’s CES 2026 announcements mark a significant step toward that future. By i